[Log rotation and cleanup script for app, resin, and tomcat]

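[appname: name of the app (resin and tomcat count as apps);]

[key: keyword used to filter log files, so that unrelated files are not deleted;]

[cleanday: log retention period; the default is 30 days;]

[cleanlog: directory where the record of deleted logs is kept]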

[Core command: find locates the log files under the log directory that contain the keyword, and a for loop then deletes those older than $cleanday days]
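The find-then-delete step described above can be sketched as a small function. This is only an illustration under stated assumptions: `clean_old_logs` is a hypothetical name that does not appear in the script below, and it filters with `find -name` on the file name only, whereas the script pipes the full path through `grep` (so a keyword that also appears in the directory name matches every file there). Using `-print0` with `read -d ''` is a swapped-in technique that keeps file names containing spaces intact.

```shell
#!/bin/bash
# Sketch only: clean_old_logs is a hypothetical helper, not part of the original script.
# Usage: clean_old_logs <logdir> <keyword> <days>
clean_old_logs() {
    local logdir=$1 key=$2 days=$3
    # -name "*key*" matches the keyword against the file name only;
    # -mtime +N selects files last modified more than N days ago;
    # -print0 / read -d '' pass names safely even if they contain spaces.
    find "$logdir" -type f -name "*${key}*" -mtime +"$days" -print0 |
        while IFS= read -r -d '' file; do
            rm -f -- "$file" && printf 'removed %s\n' "$file"
        done
}
```

Called as `clean_old_logs /data/log/storm storm 30`, it would print one `removed ...` line per deleted file, which can be appended to a record such as `$cleanlog/delete.log`.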

#!/bin/bash

today=$(date +%Y_%m%d_%H%M)

#appname=tomcat

#logdir=/data/log/tomcat

#key=log

#key=catalina.out

appname=storm

logdir=/data/log/$appname

key=$appname

cleanday=30

cleanlog=/data/log/clean

filelist=$(find "$logdir" -type f -mtime +"$cleanday" | grep "$key")

[[ -d $cleanlog ]] || mkdir -p $cleanlog

echo "[Date:`date`]"

if [[ -z $filelist ]];then

    echo "No $appname log files older than $cleanday days were found, exiting."

    echo "[ Date:`date` ] No $appname log files older than $cleanday days were found, exiting." >> $cleanlog/delete.log

    exit

fi

echo "Cleaning up $appname log files older than $cleanday days..."

echo "The following files will be removed:"

echo "$filelist"

echo "[ Date:`date` ]" >> $cleanlog/delete.log

echo "Cleaning up $appname log files..." >> $cleanlog/delete.log

echo "The following files will be removed:" >> $cleanlog/delete.log

echo "$filelist" >> $cleanlog/delete.log

# Read the list line by line so that paths containing spaces stay intact

while IFS= read -r i

do

    rm -f -- "$i"

    #echo $i > /dev/null 2>&1

done <<< "$filelist"

filelist2=$(find $logdir -type f -mtime +$cleanday |grep "$key")

if [[ -z $filelist2 ]];then

    echo "$appname log files were cleaned up successfully."

    echo "$appname log files were cleaned up successfully." >> $cleanlog/delete.log

else

    echo "$appname log cleanup failed!"

    echo "$appname log files that could not be removed:"

    echo "$filelist2"

    echo "$appname log cleanup failed!" >> $cleanlog/delete.log

    echo "$appname log files that could not be removed:" >> $cleanlog/delete.log

    echo "$filelist2" >> $cleanlog/delete.log

fi

[Log rotation script for nginx:]

#!/bin/bash

path=/data/log/nginx

nginx=` cat /usr/local/nginx/logs/nginx.pid `

mv $path/access.log $path/access_`date +%Y%m%d`.log

kill -USR1 $nginx # Send USR1 so that the nginx master process reopens its log files
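The rename-then-signal sequence above works because nginx keeps writing to the renamed file until it receives USR1 and only then reopens its log files under the original name. A slightly more defensive sketch of the same idea follows. `rotate_nginx_log` is a hypothetical helper name, and the log path and pid file path are passed in as arguments rather than taken from the script above.

```shell
#!/bin/bash
# Sketch only: rotate_nginx_log is a hypothetical helper, not part of the original script.
# Usage: rotate_nginx_log <access.log path> <nginx.pid path>
rotate_nginx_log() {
    local log=$1 pidfile=$2
    local stamp rotated pid
    stamp=$(date +%Y%m%d)
    rotated="${log%.log}_${stamp}.log"   # access.log -> access_YYYYMMDD.log

    # Refuse to continue if there is nothing to rotate or nobody to signal.
    [ -f "$log" ] || { echo "no log file: $log" >&2; return 1; }
    [ -s "$pidfile" ] || { echo "no pid file: $pidfile" >&2; return 1; }
    pid=$(cat "$pidfile")

    # Rename first; the running process keeps its open file handle,
    # so no log lines are lost between the rename and the signal.
    mv -- "$log" "$rotated" || return 1
    # USR1 asks the process to reopen its log files under the original name.
    kill -USR1 "$pid"
}
```

For the paths used in the script above, the call would be `rotate_nginx_log /data/log/nginx/access.log /usr/local/nginx/logs/nginx.pid`.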

[Log analysis script for nginx:]

#!/bin/bash

#Date create 2013-10-23

#Author GaoMingHuang

log_path=/data/log/nginx/access.log

log_dir=/data/log/Analysis

domain="crm.baoxian.in"

email="530035210@qq.com"

maketime=`date +%Y-%m-%d" "%H":"%M`

logdate=`date -d "yesterday" +%Y-%m-%d`

dayone=`date +%d/%b/%Y`

now=`date +%Y_%m%d_%H%M`

date_start=$(date +%s)

total_visit=`wc -l ${log_path} | awk '{print $1}'`

total_bandwidth=`awk -v total=0 '{total+=$10}END{print total/1024/1024}' ${log_path}`

# length(ip) counts the distinct IPs; asort() would also work but is gawk-only

total_unique=`awk '{ip[$1]++}END{print length(ip)}' ${log_path}`

ip_pv=`awk '{ip[$1]++}END{for (k in ip){print ip[k],k}}' ${log_path} | sort -rn |head -20`

url_num=`awk '{url[$7]++}END{for (k in url){print url[k],k}}' ${log_path} | sort -rn | head -20`

#referer=`awk -v domain=$domain '$11 !~ /http:\/\/[^/]*'"$domain"'/{url[$11]++}END{for (k in url){print url[k],k}}' ${log_path} | sort -rn `

notfound=`awk '$9 == 404 {url[$7]++}END{for (k in url){print url[k],k}}' ${log_path} | sort -rn | head -20`

#spider=`awk -F'"' '$6 ~ /Baiduspider/ {spider["baiduspider"]++} $6 ~ /Googlebot/ {spider["googlebot"]++}END{for (k in spider){print k,spider[k]}}' ${log_path}`

#search=`awk -F'"' '$4 ~ /http:\/\/www\.baidu\.com/ {search["baidu_search"]++} $4 ~ /http:\/\/www\.google\.com/ {search["google_search"]++}END{for (k in search){print k,search[k]}}' ${log_path}`

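#echo -e "Overview\nReport generated at: ${maketime}\nTotal requests: ${total_visit}\nTotal bandwidth: ${total_bandwidth}M\nUnique visitors: ${total_unique}\n\nClient IP statistics\n${ip_pv}\n\nRequested URLs (top 20 pages)\n${url_num}\n\nReferrer statistics\n${referer}\n\n404 statistics (top 20 pages)\n${notfound}\n\nSpider statistics\n${spider}\n\nSearch engine referrals\n${search}"

# Find out what the busiest IP is requesting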

max_ip=`awk '{ip[$1]++}END{for (k in ip){print ip[k],k}}' ${log_path} | sort -rn |head -1 |awk '{print $2}'`

ip_havi=`awk -v ip="$max_ip" '$1 == ip {print $7}' ${log_path} | sort | uniq -c | sort -nr | head -20`

# Find out which times of the current day had the most requests

time_stats=`awk '{print $4}' ${log_path} | grep "$dayone" |cut -c 14-18 |sort|uniq -c|sort -nr |head -n 10`

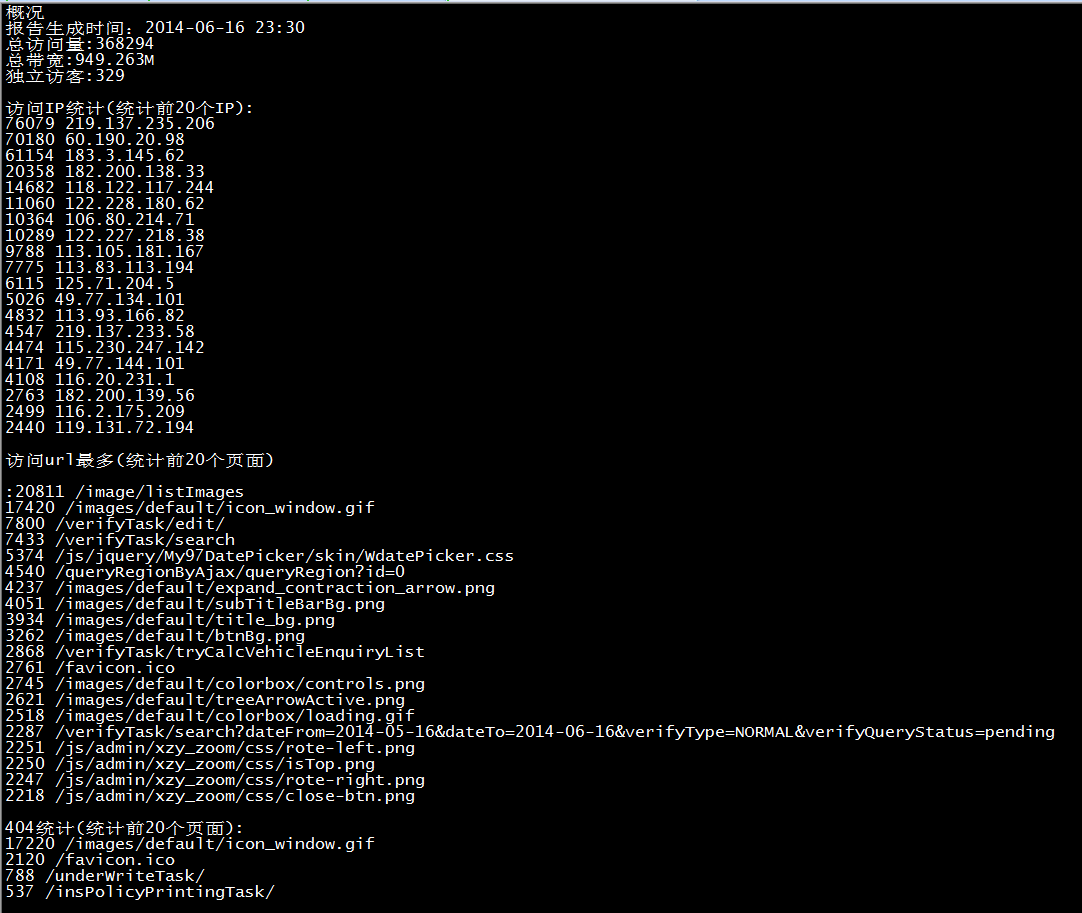

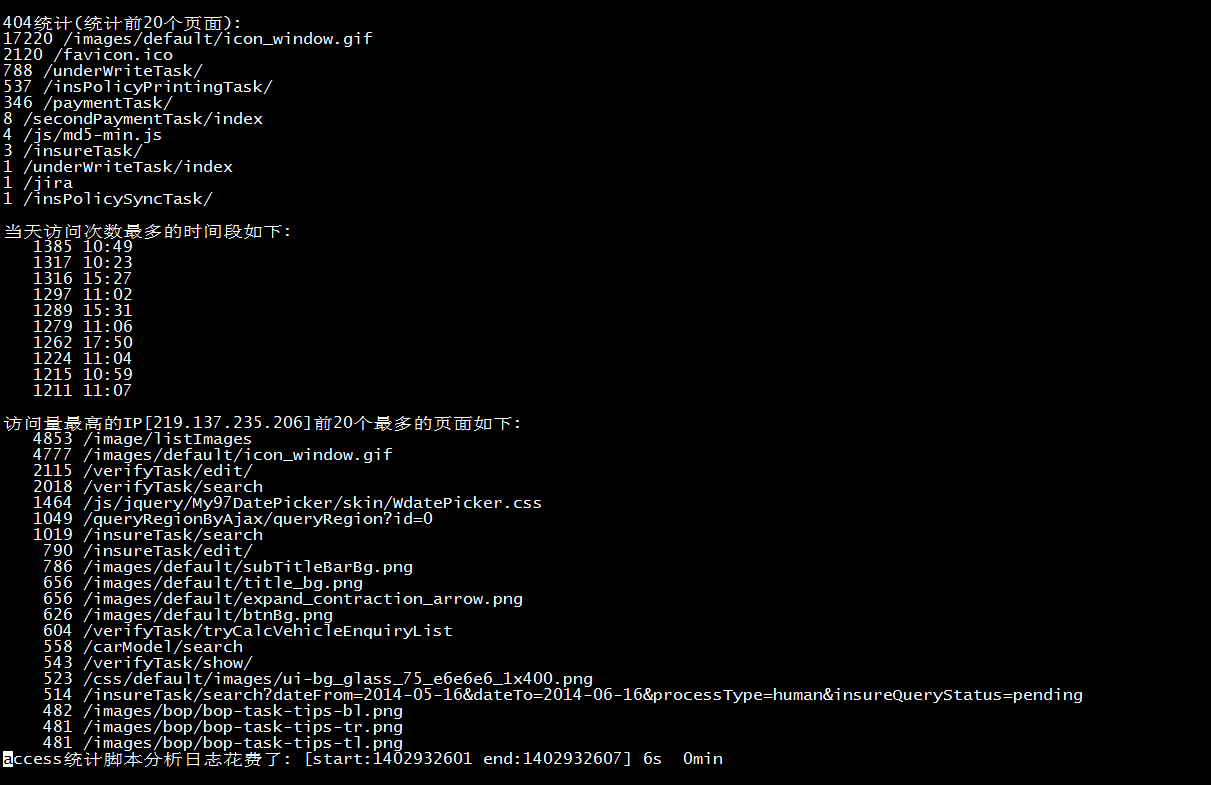

echo -e "概况\n报告生成时间:${maketime}\n总访问量:${total_visit}\n总带宽:${total_bandwidth}M\n独立访客:${total_unique}\n\n访问IP统计(统计前20个IP):\n${ip_pv}\n\n访问url最多(统计前20个页面)\n:${url_num}\n\n404统计(统计前20个页面):\n${notfound}\n\n当天访问次数最多的时间段如下:\n${time_stats}\n\n访问量最高的IP[${max_ip}]前20个最多的页面如下:\n${ip_havi} "

[[ -d $log_dir ]] || mkdir -p $log_dir

echo -e "概况\n报告生成时间:${maketime}\n总访问量:${total_visit}\n总带宽:${total_bandwidth}M\n独立访客:${total_unique}\n\n访问IP统计(统计前20个IP):\n${ip_pv}\n\n访问url最多(统计前20个页面)

\n:${url_num}\n\n404统计(统计前20个页面):\n${notfound}\n\n当天访问次数最多的时间段如下:\n${time_stats}\n\n访问量最高的IP[${max_ip}]前20个最多的页面如下:\n${ip_havi} " > $log_dir/analysis_access$now.log

date_end=$(date +%s)

time_take=$(($date_end-$date_start))

take_time=$(($time_take/60))

echo "access统计脚本分析日志花费了: [start:$date_start end:$date_end] $time_take"s" $take_time"min""

echo "access统计脚本分析日志花费了: [start:$date_start end:$date_end] $time_take"s" $take_time"min"" >> $log_dir/analysis_access$now.log[针对nginx日志分析脚本结果展现如下:]
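The analysis script relies on the whitespace-separated field positions of nginx's default combined log format: $1 is the client IP, $4 the timestamp, $7 the request path, $9 the status code, and $10 the bytes sent. A minimal sketch for checking those positions before pointing the script at real data is shown here. The three sample log lines, their IPs, and their paths are made up for illustration. If a custom `log_format` is in use, the field numbers will differ.

```shell
#!/bin/bash
# Made-up sample in nginx's default "combined" format; values are illustrative only.
sample=$(mktemp)
cat > "$sample" <<'EOF'
10.0.0.1 - - [23/Oct/2013:10:15:32 +0800] "GET /index.html HTTP/1.1" 200 1024 "-" "curl/7.29.0"
10.0.0.1 - - [23/Oct/2013:10:15:40 +0800] "GET /missing HTTP/1.1" 404 512 "-" "curl/7.29.0"
10.0.0.2 - - [23/Oct/2013:10:16:01 +0800] "GET /index.html HTTP/1.1" 200 1024 "-" "curl/7.29.0"
EOF

# Sum of $10 (bytes sent) over all requests
total_bytes=$(awk '{total += $10} END {print total}' "$sample")
# Number of distinct values of $1 (client IP), counted portably
unique_ips=$(awk '{ip[$1]++} END {n = 0; for (k in ip) n++; print n}' "$sample")
# IP with the most requests
top_ip=$(awk '{ip[$1]++} END {for (k in ip) print ip[k], k}' "$sample" | sort -rn | head -1 | awk '{print $2}')
# Paths ($7) of requests whose status ($9) is 404
not_found=$(awk '$9 == 404 {print $7}' "$sample")
# Characters 14-18 of $4 ("[23/Oct/2013:10:15:32") are the HH:MM part
minute=$(awk 'NR == 1 {print $4}' "$sample" | cut -c 14-18)

echo "bytes=$total_bytes unique=$unique_ips top=$top_ip 404=$not_found minute=$minute"
rm -f "$sample"
```

With this sample the script prints `bytes=2560 unique=2 top=10.0.0.1 404=/missing minute=10:15`.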

| Field | Value |
| --- | --- |
| Author | 明哥 |
| Article URL | https://www.pvcreate.com/index.php/archives/10/ |
| Created | 2014-06-17 |
| Follow / subscribe | WeChat subscription account |
| Open-source projects | https://gitee.com/lookingdreamer |
| Tool market | https://gitee.com/lookingdreamer/SPPPOTools |